Cloud Kitchen Delivery Performance Case Study

This case study documents how a high-volume cloud kitchen stabilised delivery performance during peak hours after implementing CKaaS (Cloud Kitchen as a Service) systems. Before the intervention, the kitchen performed well during non-peak hours but struggled with delays, cancellations, and SLA breaches during rush periods. Many founders encounter this issue as order volume grows, as discussed in Why My Cloud Kitchen Profits Are Declining.

Over a sixty-day period, the kitchen achieved consistent delivery performance during peak hours without increasing manpower, changing the menu, or introducing platform throttling. The improvement came entirely from operational systemisation and execution control, similar to the approaches used when Fixing Cloud Kitchen Delays, Refunds, and Complaints.

Cloud Kitchen Delivery Performance Case Study: Case Background

The kitchen operated four delivery-only brands from a single location, handling between two hundred and two hundred eighty orders per day. Peak-hour traffic between 7:00 PM and 9:30 PM accounted for more than fifty percent of daily volume. Swiggy and Zomato were the primary order sources.
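To make the peak load concrete, the figures above can be turned into an approximate arrival rate. The sketch below is only back-of-the-envelope arithmetic: it treats the "more than fifty percent" share as exactly 50%, so the results are lower bounds, and the function name is illustrative.

```python
# Figures taken from the case background. The peak share is stated as
# "more than fifty percent", so 0.50 gives a lower bound on the rate.
DAILY_ORDERS_LOW = 200
DAILY_ORDERS_HIGH = 280
PEAK_SHARE = 0.50
PEAK_WINDOW_MINUTES = 150  # 7:00 PM to 9:30 PM


def peak_orders_per_minute(daily_orders: int) -> float:
    """Minimum average order arrival rate during the peak window."""
    return daily_orders * PEAK_SHARE / PEAK_WINDOW_MINUTES


low_rate = peak_orders_per_minute(DAILY_ORDERS_LOW)
high_rate = peak_orders_per_minute(DAILY_ORDERS_HIGH)
print(f"{low_rate:.2f} to {high_rate:.2f} orders per minute")
# prints "0.67 to 0.93 orders per minute"
```

In other words, during the rush the kitchen received a new order at least every 64 to 90 seconds on average, across four brands.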

While overall ratings remained between 4.0 and 4.3, delivery performance during peak hours was inconsistent. Average preparation times spiked, rider wait times increased, and delayed deliveries became a recurring customer complaint. These symptoms are common in kitchens that scale order volume before stabilising internal systems, as explained in How to Stabilise Profits Before Scaling.

Cloud Kitchen Delivery Performance Case Study: The Core Problem

The founder initially believed peak-hour delays were unavoidable and could only be fixed by adding more staff or limiting order intake. However, adding staff risked eroding margins, and limiting order intake risked suppressing growth.

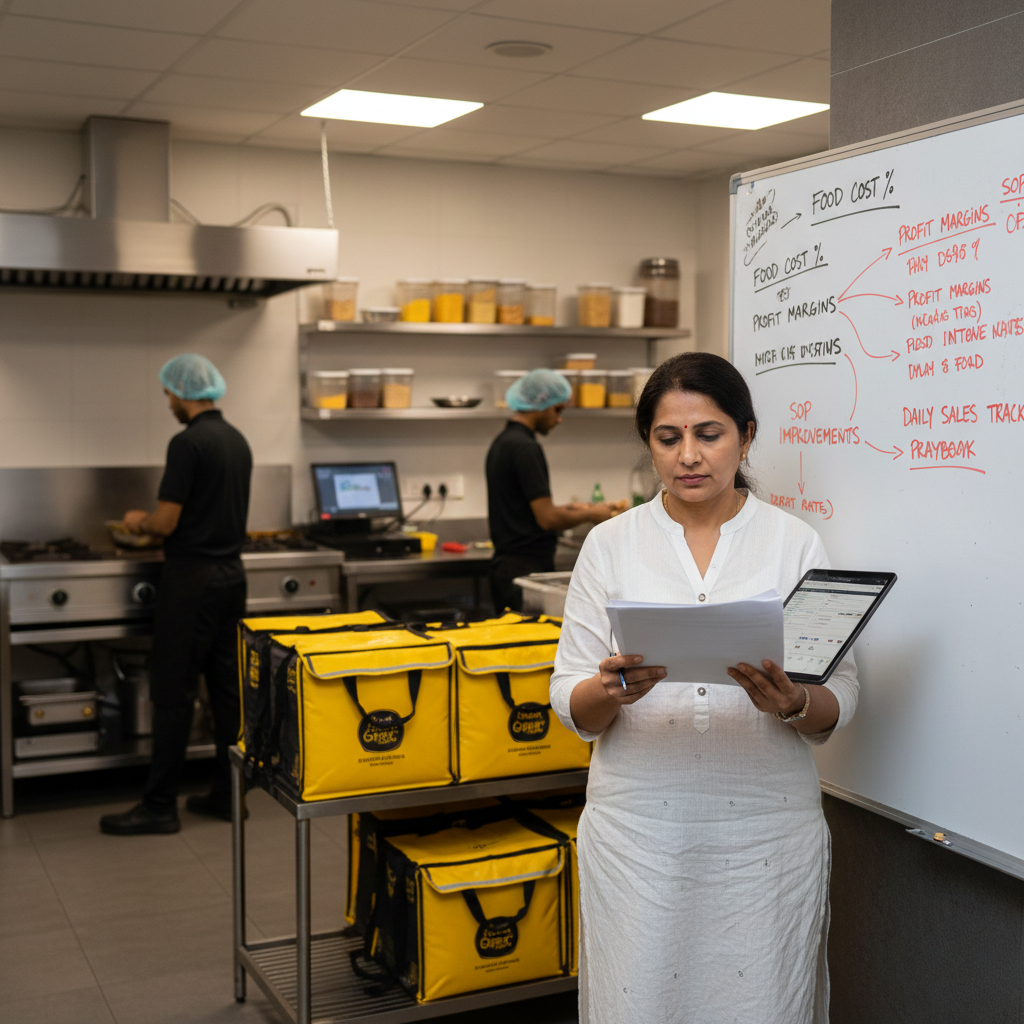

A deeper operational review revealed that delays were not caused by demand alone, but by poor pacing, unclear role ownership, and a lack of real-time control. Many founders reach this insight when growth starts damaging operations, as described in When Growth Is Hurting Your Cloud Kitchen Operations.

Cloud Kitchen Delivery Performance Case Study: Peak-Hour Performance Audit

The CKaaS intervention began with a detailed audit of peak-hour operations across thirty days. Orders placed during rush periods were analysed separately to isolate performance gaps masked by daily averages.
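The idea of separating rush-period orders from the daily average can be sketched as follows. This is a minimal illustration, not the audit tooling actually used: the `(placed_at, prep_minutes)` order schema and the sample values are assumptions, and only the 7:00 PM to 9:30 PM window comes from the case background.

```python
from datetime import datetime, time
from statistics import mean

PEAK_START = time(19, 0)   # 7:00 PM, from the case background
PEAK_END = time(21, 30)    # 9:30 PM


def is_peak(placed_at: datetime) -> bool:
    """True when the order was placed inside the peak window."""
    return PEAK_START <= placed_at.time() <= PEAK_END


def average_prep_by_window(orders):
    """orders: list of (placed_at, prep_minutes) tuples (hypothetical schema)."""
    peak = [prep for placed_at, prep in orders if is_peak(placed_at)]
    off_peak = [prep for placed_at, prep in orders if not is_peak(placed_at)]
    everything = peak + off_peak
    return {
        "overall": mean(everything) if everything else None,
        "peak": mean(peak) if peak else None,
        "off_peak": mean(off_peak) if off_peak else None,
    }


# Made-up sample data, for illustration only.
sample = [
    (datetime(2024, 1, 5, 13, 10), 12),
    (datetime(2024, 1, 5, 15, 40), 11),
    (datetime(2024, 1, 5, 19, 30), 24),
    (datetime(2024, 1, 5, 20, 45), 26),
]
summary = average_prep_by_window(sample)
print(summary)
```

With this sample, the overall average of 18.25 minutes hides a peak average of 25 minutes against an off-peak average of 11.5, which is the kind of gap a daily average masks.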

The audit revealed that more than sixty-five percent of peak-hour delays were caused by internal bottlenecks, particularly at the packing station and during rider coordination.
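One way such an attribution could be computed is to compare each delayed order's stage timings against per-stage targets and count which stage overran the most. The sketch below is hypothetical throughout: the stage names, the target minutes, and the sample orders are assumptions for illustration and are not taken from the audit.

```python
from collections import Counter

# Hypothetical per-stage targets in minutes; not from the case study.
STAGE_TARGETS = {"prep": 12, "packing": 3, "rider_handoff": 4}


def worst_stage(stage_minutes: dict) -> str:
    """Return the stage with the largest overrun against its target."""
    return max(STAGE_TARGETS, key=lambda s: stage_minutes[s] - STAGE_TARGETS[s])


def bottleneck_shares(delayed_orders: list) -> dict:
    """Share of delayed orders attributed to each stage."""
    counts = Counter(worst_stage(order) for order in delayed_orders)
    total = sum(counts.values())
    return {stage: count / total for stage, count in counts.items()}


# Made-up delayed orders, for illustration only.
delayed = [
    {"prep": 13, "packing": 8, "rider_handoff": 5},
    {"prep": 12, "packing": 7, "rider_handoff": 4},
    {"prep": 11, "packing": 3, "rider_handoff": 10},
    {"prep": 20, "packing": 4, "rider_handoff": 5},
]
shares = bottleneck_shares(delayed)
print(shares)
```

A real audit would also need a category for external causes, such as late rider arrival, so that internal and external delays can be separated.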

Cloud Kitchen Delivery Performance Case Study: Identifying Bottlenecks

Staff roles blurred during rush periods, prep pacing varied by individual judgment, and no one actively monitored SLA risk. Once delays began compounding, recovery became difficult.

These patterns are typical in founder-dependent kitchens, as explained in Founder-Dependent Kitchen Converted Into System-Driven Operations.

Cloud Kitchen Delivery Performance Case Study: CKaaS Control Systems

CKaaS introduced peak-hour-specific SOPs with clearly defined roles for order pacing, packing flow, and rider coordination. Prep buffers were adjusted dynamically to absorb demand spikes without breaching SLAs.
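A dynamically adjusted prep buffer could, for instance, grow with the number of open orders and stop at a cap. The rule below is an assumed example of that idea, not the CKaaS rule itself; the function name, the parameters, and every default value are hypothetical.

```python
def prep_buffer_minutes(open_orders: int,
                        base_buffer: float = 2.0,
                        comfortable_queue: int = 6,
                        minutes_per_extra_order: float = 0.5,
                        max_buffer: float = 8.0) -> float:
    """Buffer added to the quoted prep time, based on current queue depth.

    All defaults are illustrative assumptions, not values from the case study.
    """
    # Only orders beyond the queue the line handles comfortably add buffer.
    extra_orders = max(0, open_orders - comfortable_queue)
    # Cap the buffer so quoted times never grow without limit.
    return min(max_buffer, base_buffer + extra_orders * minutes_per_extra_order)


print(prep_buffer_minutes(4))   # quiet period: 2.0
print(prep_buffer_minutes(10))  # moderate spike: 4.0
print(prep_buffer_minutes(30))  # heavy spike, capped: 8.0
```

The cap matters as much as the growth: an uncapped buffer would protect the SLA on paper while quoting times customers will not accept.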

Orders approaching SLA risk were flagged operationally, allowing real-time corrections instead of reactive firefighting.
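Flagging of this kind can be sketched as a simple check on how much of an order's time budget has been used. The example assumes a hypothetical `OpenOrder` record, a kitchen-side `sla_minutes` target, and a 75% warning threshold; none of these names or values come from the case study.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class OpenOrder:
    order_id: str
    accepted_at: datetime
    sla_minutes: int  # hypothetical kitchen-side handover target


def at_risk(order: OpenOrder, now: datetime, warn_fraction: float = 0.75) -> bool:
    """True once the order has used warn_fraction of its SLA budget."""
    elapsed = now - order.accepted_at
    return elapsed >= timedelta(minutes=order.sla_minutes) * warn_fraction


def flag_orders(orders: list, now: datetime) -> list:
    """Return at-risk orders, the one closest to its deadline first."""
    risky = [o for o in orders if at_risk(o, now)]
    return sorted(
        risky,
        key=lambda o: o.accepted_at + timedelta(minutes=o.sla_minutes),
    )


# Made-up open orders, for illustration only.
now = datetime(2024, 1, 5, 20, 0)
open_orders = [
    OpenOrder("B", datetime(2024, 1, 5, 19, 50), 20),  # 10 of 20 minutes used
    OpenOrder("C", datetime(2024, 1, 5, 19, 44), 20),  # 16 of 20 minutes used
    OpenOrder("A", datetime(2024, 1, 5, 19, 40), 20),  # 20 of 20 minutes used
]
flagged = flag_orders(open_orders, now)
print([o.order_id for o in flagged])  # prints ['A', 'C']
```

Warning before the deadline, rather than at it, is what leaves room for a correction such as reprioritising packing or alerting the rider coordinator.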

Operational Insight: Managing Peak Load Without Adding Manpower

One of the most important lessons from this case study was that peak-hour performance does not require additional manpower when systems are strong. Instead of increasing staff, the kitchen focused on improving flow efficiency and execution clarity.

Workstations were aligned to reduce movement, prep buffers were adjusted based on demand patterns, and responsibilities were clearly divided. This reduced idle time and prevented congestion during high-order windows.

By optimising how work was done rather than adding more people, the kitchen improved speed, reduced errors, and maintained consistency even at higher volumes. This approach made peak-hour operations scalable without increasing costs.

Cloud Kitchen Delivery Performance Case Study: Outcome and Results

Within sixty days, peak-hour SLA breaches fell significantly. Average preparation time stabilised, rider wait times dropped, and delivery consistency improved across all four brands.

Customer complaints declined, platform trust scores improved, and the kitchen handled higher peak-hour volume without operational stress.

Cloud Kitchen Delivery Performance Case Study: Key Takeaways

This case study shows that delivery instability during peak hours is not necessarily a manpower problem. In this kitchen, it was a systems problem. With proper pacing, role clarity, and execution control, kitchens can scale peak-hour performance without sacrificing margins.

Related Case Studies and Reads

- How to Fix a Loss-Making Cloud Kitchen

- Why Discounts Are Not Solving Your Profit Problem

- From 50 Orders to 300 Orders: Operations Scaling Guide

- Standardizing Kitchen Execution Across Shifts

Have Questions?

If you want deeper clarity on peak-hour control, CKaaS systems, or delivery performance optimisation, detailed answers are available in the Grow Kitchen FAQs.