A second cloud kitchen rarely fails because of “bad luck” or because the “new location didn’t work.” It fails because of four linked problems: replication, management bandwidth, unit economics, and systems transfer. First kitchens often survive on founder presence, informal control, and daily firefighting. Second kitchens expose what wasn’t systemized: SOPs, training, prep planning, purchasing discipline, dispatch gates, margin control, and role ownership. When the second kitchen fails, it usually means you scaled volume without scaling control: inconsistent execution, weak team structure, leakier economics, delayed dispatch, higher refunds, and unclear accountability. This guide explains why second cloud kitchens fail in India and how to build a scale-ready operating system end to end with SOPs, station discipline, menu engineering, procurement routines, audits, and feedback loops. The goal is systems, not supervision.

Why Did My Second Cloud Kitchen Fail? The Real Reason “Scaling” Breaks What “Worked”

The first kitchen often feels like proof. You launched, struggled, stabilized, and started seeing orders come in. Even if profits were not perfect, the business felt alive. You learned the platforms, built a small team, figured out prep, and survived the daily chaos.

Then you opened the second kitchen with confidence. Same menu. Same brand. Same playbook. And yet within weeks or months you saw the opposite: more complaints, slower dispatch, inconsistent food, higher refunds, unstable ratings, vendor issues, staff churn, payout stress, and confusion about what exactly is breaking.

The hard truth: a second kitchen is not “copy paste.” It is a stress test of whether your first kitchen was running on systems or on you. If the first kitchen worked because you were constantly present, the second kitchen fails because you cannot be everywhere.

If you want the profitability foundation lens first, start with Cloud Kitchen Profitability Consultant in India and map recurring execution leaks using Common Operational Mistakes in Cloud Kitchens.

What “Second Kitchen Failure” Actually Means (Not Just “Location Problem”)

Most founders blame the second kitchen on location, rent, or “market fit.” Sometimes that is true. But most second-kitchen failures happen even when demand exists. Orders do come in. The issue is that the kitchen cannot deliver the same outcome consistently at the same cost structure.

A second kitchen fails in one of four visible ways: (1) operations collapse (late dispatch, chaos, wrong items), (2) quality consistency breaks (portions drift, taste varies, packaging fails), (3) economics get weaker (food cost rises, waste rises, discounts increase), or (4) management bandwidth snaps (no one owns standards, so standards decay).

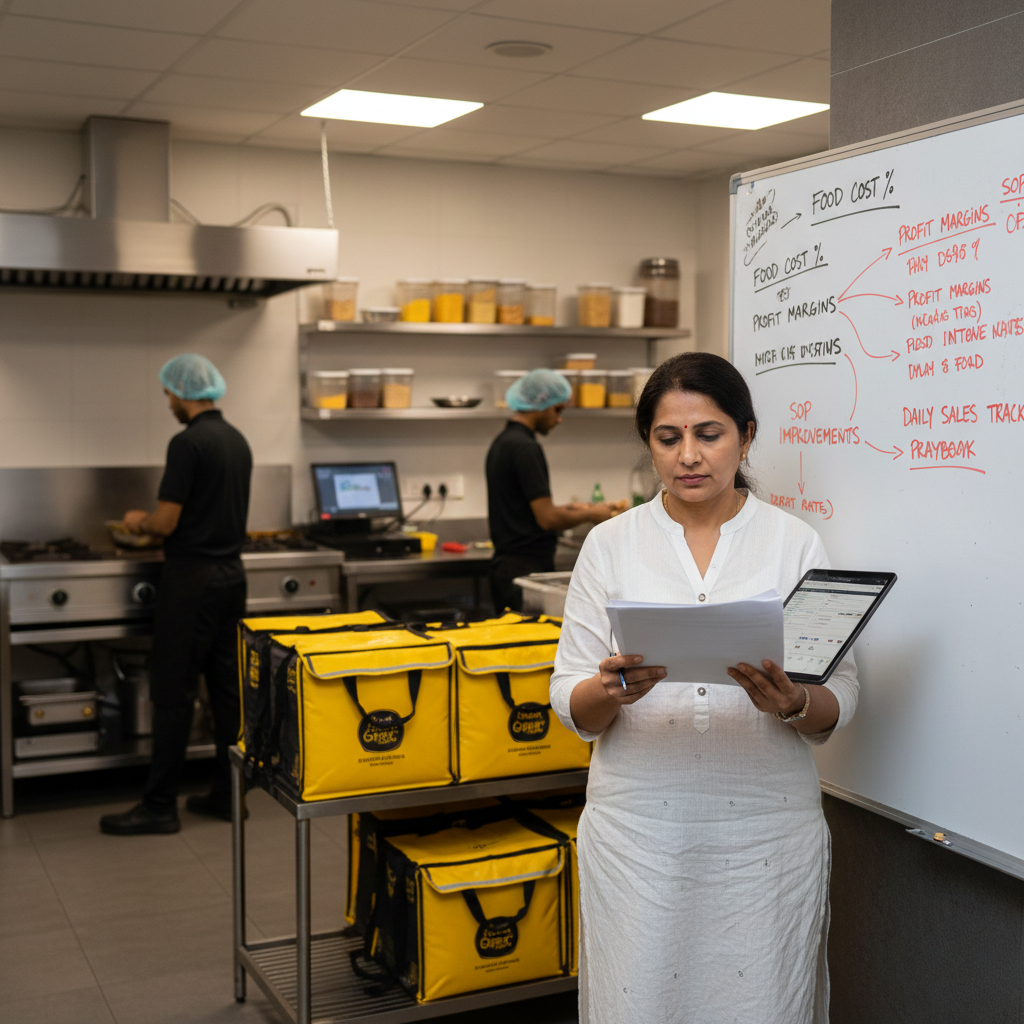

The most important mindset shift: your first kitchen taught you how to “run a kitchen.” Your second kitchen demands that you “run a system.” Systems are what make outcomes repeatable without your constant presence.

If your second kitchen failed quickly, it’s usually because replication happened at the surface level (same menu, same listing, same packaging) but not at the system level (same SOPs, same training, same prep planning, same gates, same KPIs, same audits).

The Unit Economics Lens: Your Second Kitchen Often Starts With Worse Economics Than Your First

A brutal reality: second kitchens often launch with weaker unit economics because they carry extra friction. New staff make more mistakes. New vendors quote inconsistent rates. A new layout slows down stations. New dispatch behavior creates delays. And your attention is split, so leakage stays uncorrected longer.

That means you can have the same revenue but lower contribution margin. A practical order-level view is still the truth:

Contribution Margin = Order Value − Aggregator commission & charges − Packaging cost − Food cost (COGS) − Discount burn − Refund/penalty leakage.

If your second kitchen starts with higher refunds, higher waste, higher cancellations, or higher food cost percentage, growth will not save it. Growth will multiply its leakage.
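To make the order-level view concrete, here is a minimal Python sketch of the contribution margin calculation. The class name, field names, and all rupee figures are hypothetical and chosen only for illustration; they are not benchmarks for any real outlet.

```python
from dataclasses import dataclass


@dataclass
class OrderEconomics:
    """Per-order figures in rupees. All values used below are illustrative."""
    order_value: float
    aggregator_charges: float      # commission plus platform charges
    packaging_cost: float
    food_cost: float               # COGS
    discount_burn: float
    refund_penalty_leakage: float

    def contribution_margin(self) -> float:
        # Order value minus every per-order deduction listed in the article.
        return (
            self.order_value
            - self.aggregator_charges
            - self.packaging_cost
            - self.food_cost
            - self.discount_burn
            - self.refund_penalty_leakage
        )


# Hypothetical outlets: same order value, different leakage.
outlet_1 = OrderEconomics(400, 100, 20, 130, 30, 10)
outlet_2 = OrderEconomics(400, 100, 24, 145, 45, 26)

print(outlet_1.contribution_margin())  # 110
print(outlet_2.contribution_margin())  # 60
```

In this made-up comparison both outlets earn the same revenue per order, yet the second keeps far less of it. Small increases in packaging, food cost, discounts, and refunds add up quickly.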

If you want the payout-quality lens in detail, read Aggregator Commission Impact in India, and map refund/cancellation leakage using Refunds and Cancellations Impact on Cloud Kitchen Profitability.

The 14 Reasons Your Second Cloud Kitchen Failed (And What Each One Looks Like)

Second-kitchen failure rarely comes from one big mistake. It comes from multiple small gaps that become fatal when you are not physically present. Below are the most common reasons second kitchens fail in Indian delivery operations.

1) You replicated the menu, not the SOPs. Recipes may exist in someone’s head. But station SOPs (portion tools, packing rules, dispatch gates) were never documented. The new team “tries their best,” and output varies by shift.

2) You underestimated training time and error cost. New staff will make mistakes. Mistakes create refunds, remakes, and rating drops. If you didn’t design training as a system (shadow shifts, checklists, sign-offs), the kitchen bleeds quietly from day one.

3) Founder presence was the quality gate in Kitchen 1. In the first kitchen, you corrected portions, checked packing, and fixed dispatch. In the second kitchen, nobody replaced that gate. So standards decayed without anyone noticing until ratings dropped.

4) Station layout and workflow were slower than expected. A second kitchen often has different physical constraints: smaller packing table, awkward handover point, no labeling station, poor shelving. That increases order cycle time and creates chronic late dispatch.

5) Your dispatch system didn’t transfer. Many kitchens don’t have a real dispatch gate, just a person “handing over.” Without a dispatch scan, add-on verification, and completeness check, wrong items and missing items become routine. Implement discipline using Cloud Kitchen Dispatch SOP.

6) Procurement became reactive, so food cost rose. New outlet means new vendor relationships and inconsistent rates. If you didn’t standardize RM specs, approved brands, and reorder routines, the second kitchen buys “whatever is available,” and COGS drifts upward.

7) Prep planning and par levels weren’t rebuilt for the new outlet. Stock-outs cause cancellations. Cancellations destroy trust and ranking. If the outlet lacks par levels and batch yield planning, availability becomes unstable during peak.

8) You scaled volume before stabilizing outcomes. Many founders push offers to “kickstart” the new outlet. But offers amplify weak systems. Volume reveals leaks faster than you can fix them, and the kitchen collapses under pressure.

9) Packaging and sealing rules weren’t standardized. The second outlet often uses different containers, tapes, or bagging style. Leakage rises, texture fails, and presentation suffers. Customers judge the received pack before the taste.

10) You launched too many SKUs, too soon. More SKUs increase error probability. Without tight categories, bestsellers, and simple execution, staff confusion grows and accuracy drops.

11) You didn’t install role ownership. “Everyone does everything” looks flexible but it kills repeatability. Prep, cook, pack, dispatch must have clear owners and gates. Otherwise output becomes mood-based. If you want the model, read Role-Based Kitchen Operations Explained.

12) You lacked a weekly feedback loop, so problems repeated. Complaints are data. Refund reasons are data. Late order counts are data. If you didn’t review them weekly and update SOPs, the same mistakes repeated daily.

13) Ratings and visibility dropped faster than you expected. New outlets are fragile. Early negative outcomes train the platform to treat the outlet as risky. Then impressions fall, conversion drops, and you start discounting to survive. If you’re seeing “orders but poor visibility,” map the funnel logic using Marketing Spend vs ROI in Cloud Kitchens.

14) You didn’t standardize compliance and hygiene discipline. Small hygiene misses become customer trust issues in delivery: packaging cleanliness, sealing, labeling, and handling. Standardization is not “big-company stuff.” It is repeatability insurance.

For the core leak map, reference Common Operational Mistakes in Cloud Kitchens, and for the “systems vs supervision” mindset, use How Process Discipline Improves EBITDA.

Swiggy/Zomato Reality: Your Second Outlet Is Judged on Risk Signals, Not Your Founder Story

Aggregators do not care that you successfully ran one outlet. They evaluate each outlet as its own reliability profile: prep time, cancellations, refunds, late dispatch, complaints, ratings, and availability.

This is why second outlets fail faster than founders expect: small inconsistencies create more customer friction, friction creates refunds and low ratings, and the platform reduces distribution. Then the outlet becomes discount-dependent and margin collapses.

External policy context can help while mapping refunds and cancellations: Swiggy Refund & Cancellation Policy and Zomato Online Ordering Terms.

The actionable takeaway: treat outlet expansion as “risk reduction engineering.” Build a system where repeat failures cannot occur easily.

Second Kitchens Survive When Three Engines Are Stable: Prep Planning + Packing + Dispatch

Most second outlets don’t die because the food is terrible. They die because operational engines are unstable: prep planning fails (stock-outs, slow throughput), packing fails (leakage, missing items, wrong labels), and dispatch fails (late handover, cold food, queue chaos).

When these engines are stable, the outlet feels calm even at volume. When they are unstable, the outlet feels chaotic even at low volume.

Implement dispatch predictability using Cloud Kitchen Dispatch SOP, and reduce error repetition by mapping station-wise failures using Common Operational Mistakes in Cloud Kitchens.
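The prep planning engine comes down to simple arithmetic on par levels and batch yields. The sketch below is one possible way to express it in Python. The function names, the 25% buffer, and the portion counts are assumptions made for illustration, not recommended values.

```python
import math


def par_level(expected_peak_portions: int, buffer_ratio: float) -> int:
    """Minimum portions to have ready before peak, including a safety buffer."""
    return math.ceil(expected_peak_portions * (1 + buffer_ratio))


def batches_to_prep(par: int, on_hand: int, batch_yield: int) -> int:
    """Whole batches needed to bring stock on hand up to the par level."""
    shortfall = max(par - on_hand, 0)
    return math.ceil(shortfall / batch_yield)


# Hypothetical top SKU: 40 portions expected at peak, 25% buffer,
# 14 portions already prepped, each batch yields 12 portions.
par = par_level(expected_peak_portions=40, buffer_ratio=0.25)
print(par)                                               # 50
print(batches_to_prep(par, on_hand=14, batch_yield=12))  # 3
```

Rounding up to whole batches is deliberate here: a small surplus is usually cheaper than a stock-out during peak, though the right buffer depends on shelf life and wastage for each item.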

Why Your Second Kitchen Needed Roles, Not “Extra Helpers”

In the first kitchen, you can patch gaps by personally stepping in. In the second kitchen, patching becomes impossible. So the operating model must shift from “help when needed” to “roles with gates.”

Here is what role-based stability looks like in a second outlet:

Prep role: owns batch prep schedules, labeling, and par levels so peak readiness is predictable.

Cook role: owns recipe cards, portion tools, and holding-time rules so taste and quantity don’t drift.

Pack role: owns the packing checklist, add-on verification, sealing rules, and clean presentation.

Dispatch role: owns the final scan, bag stability, correct labeling, and fast rider handover.

Manager role: owns the weekly review of refunds, cancellations, ratings, ETA, and stock-outs, plus the resulting SOP upgrades.

If you want the full framework, use Role-Based Kitchen Operations Explained.
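The pack and dispatch gates above amount to comparing the order ticket against what is actually in the bag. Here is a minimal Python sketch of that completeness check, assuming items and add-ons are recorded as plain text labels. The function name and the sample order are hypothetical.

```python
from collections import Counter


def dispatch_gate(ordered: list[str], packed: list[str]) -> dict[str, list[str]]:
    """Compare the order ticket with the packed bag, respecting quantities."""
    want, have = Counter(ordered), Counter(packed)
    return {
        "missing": sorted((want - have).elements()),  # ordered but not packed
        "extra": sorted((have - want).elements()),    # packed but not ordered
    }


# Hypothetical order: two bowls were ordered, only one was packed,
# and a brownie that nobody ordered ended up in the bag.
ordered = ["paneer bowl", "paneer bowl", "extra dressing", "cutlery"]
packed = ["paneer bowl", "extra dressing", "cutlery", "brownie"]

result = dispatch_gate(ordered, packed)
print(result)

ok_to_dispatch = not result["missing"] and not result["extra"]
print(ok_to_dispatch)  # False
```

Whether the check runs in software or on a printed checklist matters less than the rule behind it: the bag is not handed over until both lists are empty.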

How to Prevent Your Next Second Kitchen Failure (A Practical 7 to 30 Day Rebuild Sequence)

If your second outlet failed, the goal is not to feel defeated. The goal is to extract a repeatable operating system before expanding again. Below is a practical rollout sequence used in multi-outlet kitchen networks.

Step 1 (Day 1–2): Write down what only you were doing in Outlet 1. List founder actions that kept standards stable: portion checking, packing checks, vendor follow-ups, escalation handling, staff corrections. If you can’t document it, you can’t replicate it.

Step 2 (Day 1–3): Build “non-negotiable SOPs” for the top 20% items. Start with your top-selling SKUs. Create portion tools, fill lines, sealing rules, labeling format, and add-on verification steps. Don’t SOP everything at once. SOP what creates most volume and most complaints first.

Step 3 (Day 2–5): Install a visible packing checklist + dispatch scan. This single gate reduces wrong items, missing items, add-on misses, and leakage. Implement via Cloud Kitchen Dispatch SOP.

Step 4 (Day 3–7): Rebuild prep planning with par levels and batch yields. Define top SKUs and set minimum buffer quantities. Build a peak readiness checklist so unavailability and cancellations do not spike.

Step 5 (Week 2): Simplify the menu for replication. Reduce near-duplicate SKUs. Create a “bestsellers” category and remove confusing variants. Replication is easier when execution is simple.

Step 6 (Week 2): Standardize procurement specs and approved vendors. Lock RM specs, approved brands, and purchase routines. Stop reactive buying. Reactive buying creates cost drift and quality inconsistency.

Step 7 (Week 3): Run audits, not policing. Audit 5 peak orders and 5 non-peak orders. Track station-wise errors: packing, dispatch, portion, labeling, sealing. Fix the top 2 repeating errors weekly.

Step 8 (Week 3–4): Install a weekly feedback loop that forces SOP updates. Every week, review: top refund reasons, cancellations, rating comments, late dispatch counts, and item unavailability. Then update SOPs and train staff again. No feedback loop = repeat failure forever.
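The audit and weekly review in Steps 7 and 8 can be tallied with very little tooling. The following Python sketch counts station-wise errors from an audit log and surfaces the top two repeating ones. The log format, order IDs, and error labels are assumptions for illustration only.

```python
from collections import Counter

# Each audited order lists the stations where an error was found (hypothetical data).
audit_log = [
    {"order": "A1", "window": "peak", "errors": ["packing", "sealing"]},
    {"order": "A2", "window": "peak", "errors": ["packing"]},
    {"order": "A3", "window": "peak", "errors": []},
    {"order": "B1", "window": "non-peak", "errors": ["labeling"]},
    {"order": "B2", "window": "non-peak", "errors": ["packing", "labeling"]},
]


def top_repeating_errors(log, n=2):
    """Return the n most frequent station errors across all audited orders."""
    counts = Counter(err for entry in log for err in entry["errors"])
    return counts.most_common(n)


print(top_repeating_errors(audit_log))  # [('packing', 3), ('labeling', 2)]
```

The output is the week's fix list: in this invented sample, packing and labeling would get SOP updates and retraining before anything else.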

If you want the unit-economics + growth discipline lens, map this with Marketing Spend vs ROI in Cloud Kitchens and How Process Discipline Improves EBITDA.

External process references (useful for standardisation thinking): Standardized Work (Lean lexicon), ISO 22000 overview, and FSSAI Hygiene Requirements (Schedule 4 reference).

Final Takeaway: Second Kitchens Fail When Replication Happens Without Transfer of Control

If your second cloud kitchen failed, it usually means the first kitchen was running on founder control, not on a transferable operating system. The second outlet exposes gaps in SOPs, training, procurement discipline, station gates, and role ownership.

Multi-outlet brands win when outcomes are repeatable: portions are stable, packing is checklist-driven, dispatch is gated, prep is planned, refunds and cancellations stay controlled, and weekly reviews force SOP upgrades. That is what makes expansion survivable.

Operating frameworks from GrowKitchen and operating partner brands like Fruut and GreenSalad are built to convert “founder-driven kitchens” into “system-driven kitchen networks.”

FAQs: Why Did My Second Cloud Kitchen Fail?

What is the biggest reason second cloud kitchens fail?

Lack of transferable systems. The first outlet works with founder presence, but the second needs SOPs, roles, and gates.

Is second kitchen failure usually a location problem?

Sometimes, but most often it’s an execution and control problem: training, prep planning, dispatch discipline, and economics drift.

Which fix prevents repeat failure the fastest?

Packing checklist + dispatch scan, plus SOP-led portions for top sellers. These reduce refunds, wrong items, and late dispatch quickly.

How do I know if I’m ready to open a third kitchen?

When the first kitchen runs with stable outcomes without your daily presence, and your SOPs, training, and KPIs are repeatable.

- Cloud Kitchen Profitability Consultant in India

- Common Operational Mistakes in Cloud Kitchens

- Cloud Kitchen Dispatch SOP

- Role-Based Kitchen Operations Explained

- Refunds and Cancellations Impact on Cloud Kitchen Profitability

- Aggregator Commission Impact in India

- Marketing Spend vs ROI in Cloud Kitchens

- How Process Discipline Improves EBITDA
